AI and emotional advice: are we worried about the wrong group?

“You can tell me anything.”

That’s the kind of line you’d expect from a friend or a trusted adult. It’s also something an AI chatbot can say instantly, without hesitation. And that’s where much of the current concern begins.

Students using AI for advice, particularly emotional advice, has quickly become a talking point. The idea that something which sounds human, but isn’t, is now part of how young people think things through raises understandable questions. Reporting from Tes and warnings from the British Association for Counselling and Psychotherapy (BACP) highlight real risks, particularly where advice may be inaccurate, oversimplified, or lacking appropriate follow-up.

Recent findings from Internet Matters reinforce why this concern has gained traction. Their report highlights how AI chatbots are becoming a go-to for many young people, often used without much oversight and, in some cases, replacing more traditional sources of reassurance.

So far, so familiar. But there’s a risk that the conversation stops there.

Because when you look more closely at how students are actually engaging with AI, the picture becomes less clear-cut, and perhaps a little less alarming than we might expect.

- It’s not as clear-cut as that

- The version of the story we keep hearing

- There’s another possibility

- That doesn’t mean there isn’t a risk

- What schools can actually do

- It’s not really about AI

- A more useful place to start

- So where does that leave us?

- How VotesforSchools can support

It’s not as clear-cut as that

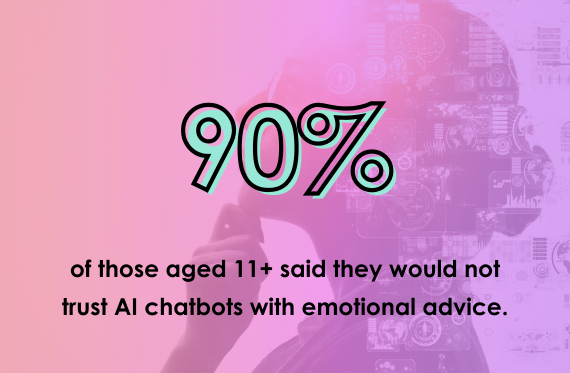

Spend a bit of time listening to how students talk about AI, and the tone is rarely extreme. They don’t all trust it, and they don’t all dismiss it either. Most sit somewhere in the middle, and move between positions depending on the situation.

Students will often acknowledge what AI is good at. It’s quick, it’s available, and it doesn’t judge. But that’s usually followed by a shift. In the same conversation, they question whether it really understands anything, whether the advice applies to them, and what happens if it gets things wrong.

This kind of mixed response comes through in our recent discussions on the topic too. Students rarely land neatly on one side. Instead, they weigh up usefulness against limitations, often recognising both at the same time.

There’s a noticeable awareness that something can sound helpful without necessarily being reliable. That distinction matters, and many students are already making it.

The version of the story we keep hearing

If you look at how this is being discussed more widely, the tone is different. Coverage from Tes focuses on safeguarding risks, particularly where students may confide in chatbots instead of people. The BACP has also raised concerns about the quality of advice and the absence of real understanding or follow-up.

None of that is wrong. But it does lean heavily in one direction.

It reinforces the idea that young people are especially vulnerable to AI: that they might trust it too quickly, misunderstand it, or rely on it in place of real support. That may be true in some cases, but it’s not the whole picture.

There’s another possibility

It’s worth asking where this certainty comes from. For many adults, AI has arrived quickly, with little time to explore or question how it works. Most are figuring it out independently, without structured opportunities to test or challenge it.

Research from Ofcom and the Pew Research Center suggests that adults are often less confident evaluating digital information and may overestimate how accurate AI-generated responses are.

At the same time, students are increasingly encountering AI in environments where they are encouraged to question, discuss, and form a view. That contrast is difficult to ignore.

The group we’re most concerned about may also be the one with the most opportunities to think critically about what they’re using.

That doesn’t mean there isn’t a risk

There is.

AI can simplify complex situations, miss important context, and present advice with a level of confidence that isn’t always deserved. It can feel reassuring without being accountable, and when it comes to emotional advice, that distinction matters.

This isn’t limited to young people. Coverage from The Guardian shows that AI tools are increasingly being used in mental health contexts more broadly, raising similar questions about trust, accuracy, and accountability across age groups.

But recognising that risk is different from assuming how students respond to it.

What schools can actually do

If students are already approaching AI with a degree of caution, the role of schools shifts slightly. It becomes less about stopping them using it, and more about helping them make sense of it.

Avoiding AI altogether is unlikely to reflect the reality of students’ lives. The more useful question is what happens after they use it.

In practice, that might look like:

- asking students to compare AI advice with advice from a friend or trusted adult

- exploring what’s missing from a response, not just what’s included

- discussing who is responsible if advice goes wrong

- creating space for students to reflect on when they would, and wouldn’t, trust it

These aren’t new conversations. They sit naturally within PSHE, RSE, and digital literacy. What’s changed is the source of the advice.

It’s not really about AI

At least, not entirely.

This is about advice: where it comes from, what makes it trustworthy, and how people decide what to do with it. Students tend to recognise that good advice involves context, lived experience, accountability, and the ability to respond to nuance.

AI can contribute to that process. It can help structure thinking or offer a starting point. But it doesn’t replace those elements, and most students seem to understand that.

A more useful place to start

So instead of asking whether students are using AI for emotional advice, it may be more helpful to ask how they are thinking about it when they do.

Because what comes through is not certainty, but movement. Students testing ideas, holding competing views, and changing their minds.

Which is exactly the kind of thinking schools are trying to build.

So where does that leave us?

AI is now part of the landscape. Sometimes helpful, sometimes limited, occasionally misleading.

The concern around it isn’t misplaced. But it may be incomplete.

If students are already questioning what they’re seeing, the job isn’t to shut that down. It’s to support it. To help them keep questioning, recognise limitations, and decide when something needs more than a response on a screen.

And perhaps, to apply that same level of scrutiny ourselves.

Because the challenge here isn’t just what young people are doing. It’s how all of us are learning to navigate advice in a world where it no longer only comes from people.

How VotesforSchools can support

If you’re looking to explore this with students, the VotesforSchools topic “Would you trust emotional advice from AI?” is designed to open up exactly this kind of discussion.

It doesn’t push students towards a single answer. Instead, it helps them weigh up different perspectives, question assumptions, and reflect on where AI fits alongside other sources of support.

Like many PSHE topics, the value isn’t in reaching agreement, but in giving students the space to think it through properly.
